Yesterday I wasted a lot of time tracking down a bug which turned out to be pretty instructive.

I am working on a tiled deferred renderer. After adding a bunch of features, and before starting new ones, I spent some time cleaning up the development mess.

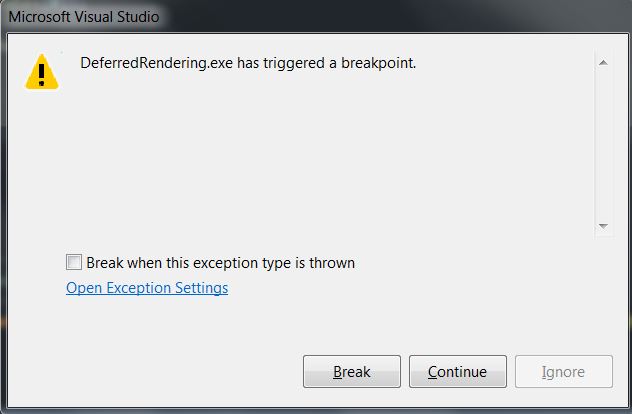

The DirectX debug device was complaining about some objects I had forgotten to release, so I made sure that my destructors were all doing their job. But then, each time I closed the program, this message showed up:

Well, it’s not really self-explanatory. I had not set any breakpoint; Visual Studio triggered it by itself. Clicking “Break” led to a SAFE_RELEASE() in the destructor of one of my singletons. When I chose “Continue”, the program terminated without any other error or message.

I first tried commenting out the supposedly faulty line. No more errors, but some DirectX objects were still alive. I thought that maybe the device had to be released last. I tried that, but the message came back, this time breaking at SAFE_RELEASE(m_pd3dDevice). In fact, I understood that it would always break on the last D3D object released. If I left one object alive the message would not pop up, but leaking an object is not a solution.

Obviously the bug was memory related, so I tried some analysis tools. Each one pointed me in a different direction, far from the real solution. So I fell back on a less subtle debugging method: comment out everything!

Since it was a memory problem, I kept only the creation and destruction of my objects, removing the calls to the update and render functions. Surprisingly, it worked: no error, everything was destroyed. I then restored the update calls, which was fine, and then the render calls, and the breakpoint was back.

I continued to isolate the problem inside the rendering functions, until only the following code was left:

    // Set render targets.
    ID3D11RenderTargetView* RTViews[2] = { ppAlbedo, ppNormal };
    engine->GetImmediateContext()->OMSetRenderTargets(2, RTViews, 0);

    // Clear render targets.
    quadRenderer->SetPixelShader(m_pClearPixelShader);
    quadRenderer->Render();

I started to think that I had messed up my quad rendering or my render targets, but it turned out that the culprit was the apparently innocent SetPixelShader function. It’s a simple (yet horrible) setter:

    void QuadRenderer::SetPixelShader(ID3D11PixelShader* ppPixelShader)
    {
        m_pQuadPixelShader = ppPixelShader;
    }

This is wrong (I’ll come back to that later), but not harmful per se. The true horror lies in the QuadRenderer destructor:

    QuadRenderer::~QuadRenderer(void)
    {
        SAFE_RELEASE(m_pQuadVertexLayout);
        SAFE_RELEASE(m_pQuadVertexShader);
        SAFE_RELEASE(m_pQuadVertexBuffer);
        SAFE_RELEASE(m_pQuadIndexBuffer);
        SAFE_RELEASE(m_pQuadPixelShader);
    }

The pixel shader is released, but which pixel shader? It wasn’t created by the QuadRenderer, so its owner must already have released it. SAFE_RELEASE checks that the pointer is not null, but here the pointer is not null: it still points to an object that has already been released. Releasing it a second time leads to the land of undefined behavior, or worse.
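Here is a minimal sketch of that failure mode. It assumes the usual definition of SAFE_RELEASE (the macro itself is not shown in this post), and `FakeShader` is a hypothetical stand-in for a COM object such as ID3D11PixelShader. Unlike a real COM object it never deletes itself, so the extra Release() can be counted safely instead of corrupting memory.

```cpp
#include <cassert>

// Assumed definition: the common form of the macro, not copied from my code base.
#define SAFE_RELEASE(p) do { if (p) { (p)->Release(); (p) = nullptr; } } while (0)

// Hypothetical stand-in for a reference-counted D3D object.
// It only records what happens, it never frees itself.
struct FakeShader {
    int refCount = 1;      // one reference, held by whoever created the shader
    int overReleases = 0;  // Release() calls made after the count reached zero

    void Release() {
        if (refCount > 0) {
            --refCount;
        } else {
            ++overReleases;  // with a real object this is a use-after-free
        }
    }
};

struct DemoResult {
    bool borrowedStillNonNull;
    int overReleases;
};

DemoResult DemonstrateDoubleRelease() {
    FakeShader shader;
    FakeShader* owner = &shader;   // pointer held by the shader's creator
    FakeShader* borrowed = owner;  // raw copy, like m_pQuadPixelShader

    SAFE_RELEASE(owner);           // releases the object and nulls only this copy

    DemoResult result{};
    result.borrowedStillNonNull = (borrowed != nullptr);

    SAFE_RELEASE(borrowed);        // the null check passes, Release() runs again
    result.overReleases = shader.overReleases;
    return result;
}
```

Calling `DemonstrateDoubleRelease()` shows that the macro only nulls the pointer it is given. Any other copy of that pointer stays non-null and will happily be released again.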

I learned several lessons thanks to that bug.

– Destructors are not the most fun part of the code, but they need to be written carefully. You can’t just look at the member variables and release them all; it can be trickier than that.

– Despite its name, the SAFE_RELEASE macro is not that safe (obvious, but easy to forget).

– Poor design can lead to annoying bugs. When I implemented the QuadRenderer class I was thinking: “OK, I’ll need a vertex shader and a pixel shader, but I want to be able to set the pixel shader I want”. This is wrong. I don’t want my QuadRenderer to have a pixel shader, I want it to render with a particular pixel shader. There is no need to store it. This is something to be careful about, so that your destructors can stay trivial.
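The last point could look roughly like the sketch below. This is not my actual renderer: `FakePixelShader` and `FakeContext` are hypothetical stand-ins for ID3D11PixelShader and the immediate context, and the `Render(shader)` signature is just one way to express “render with this shader” (a real version would bind it with PSSetShader and then draw). What matters is that the class has no pixel shader member, so its destructor has nothing borrowed to release.

```cpp
#include <cassert>

// Hypothetical stand-in for ID3D11PixelShader.
struct FakePixelShader {
    int refCount = 1;
    void Release() { --refCount; }
};

// Hypothetical stand-in for the immediate context.
struct FakeContext {
    const FakePixelShader* boundPixelShader = nullptr;
    int drawCalls = 0;
};

class QuadRenderer {
public:
    explicit QuadRenderer(FakeContext* pContext) : m_pContext(pContext) {}

    // The shader is borrowed for the duration of the call and never stored.
    void Render(FakePixelShader* pPixelShader) {
        m_pContext->boundPixelShader = pPixelShader;  // real code: PSSetShader
        ++m_pContext->drawCalls;                      // real code: draw the quad
    }

    // No destructor needed here: the renderer owns no pixel shader.

private:
    FakeContext* m_pContext;  // non-owning, outlives the renderer in this sketch
};

int RefCountAfterRendering() {
    FakeContext context;
    FakePixelShader clearShader;  // owned by the caller, not by the renderer
    {
        QuadRenderer quadRenderer(&context);
        quadRenderer.Render(&clearShader);
    }  // quadRenderer is destroyed here and does not touch clearShader
    return clearShader.refCount;
}
```

`RefCountAfterRendering()` returns the shader’s reference count after the renderer is gone. It is still 1, so the owner can release the shader exactly once. If the renderer really had to keep the shader around, the alternative would be to take a reference of its own (AddRef when storing, Release when done), but for a quad renderer that seems like more machinery than needed.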

Well, now everything is clean and I’ve learned much more than I would have thought. I can start adding new features!